OpenAI’s latest language model, GPT-4, has been officially announced, but what can it achieve that its predecessors couldn’t? Is it really such a revolution in artificial intelligence?

GPT-4, the new OpenAI model, is undoubtedly a breakthrough in language processing technology and artificial intelligence. Expectations had been building for months ahead of its launch, and it finally seems to have met them.

It has the potential to become a truly capable tool for anyone, and it only remains to be seen what applications arise from this model, including an improved ChatGPT.

The company has unveiled the capabilities of the language model on its blog, saying that it is more creative and collaborative than ever. While ChatGPT, built on GPT-3.5, only accepted text input, GPT-4 can also use images to generate captions and analysis.

But that’s just the tip of the iceberg. It is the beginning of a multitude of advantages, although it may also have some drawbacks.

What innovations does it integrate with respect to GPT-3?

Parameters: more is not synonymous with better

Regarding the differences from previous models, the key idea is “more power on a smaller scale”. OpenAI, as usual, is very cautious about disclosing details, in this case the parameters used to train GPT-4.

It is currently known that GPT-3 has 175 billion parameters, and GPT-4 is estimated to exceed that, though not by the huge margin that has circulated on social networks.

As for the assumptions many media outlets make about the number of parameters in GPT-4, OpenAI has already confirmed that it is withholding this information to protect itself from the competition. In other words, it prefers not to disclose it so that other companies developing large language models, such as Google, do not have a benchmark to beat.

Tools like ChatGPT have already shown that the number of parameters is not everything: architecture and quality also play an important role in training.

“GPT-4 has been trained with many more parameters and has received much more feedback from end users than GPT-3, which allows it to better understand the context throughout the conversation we have with it and to give more creative and longer answers,” Josué Pérez Suay, a specialist in artificial intelligence and ChatGPT, explains to Computer Hoy.

Multimodal model

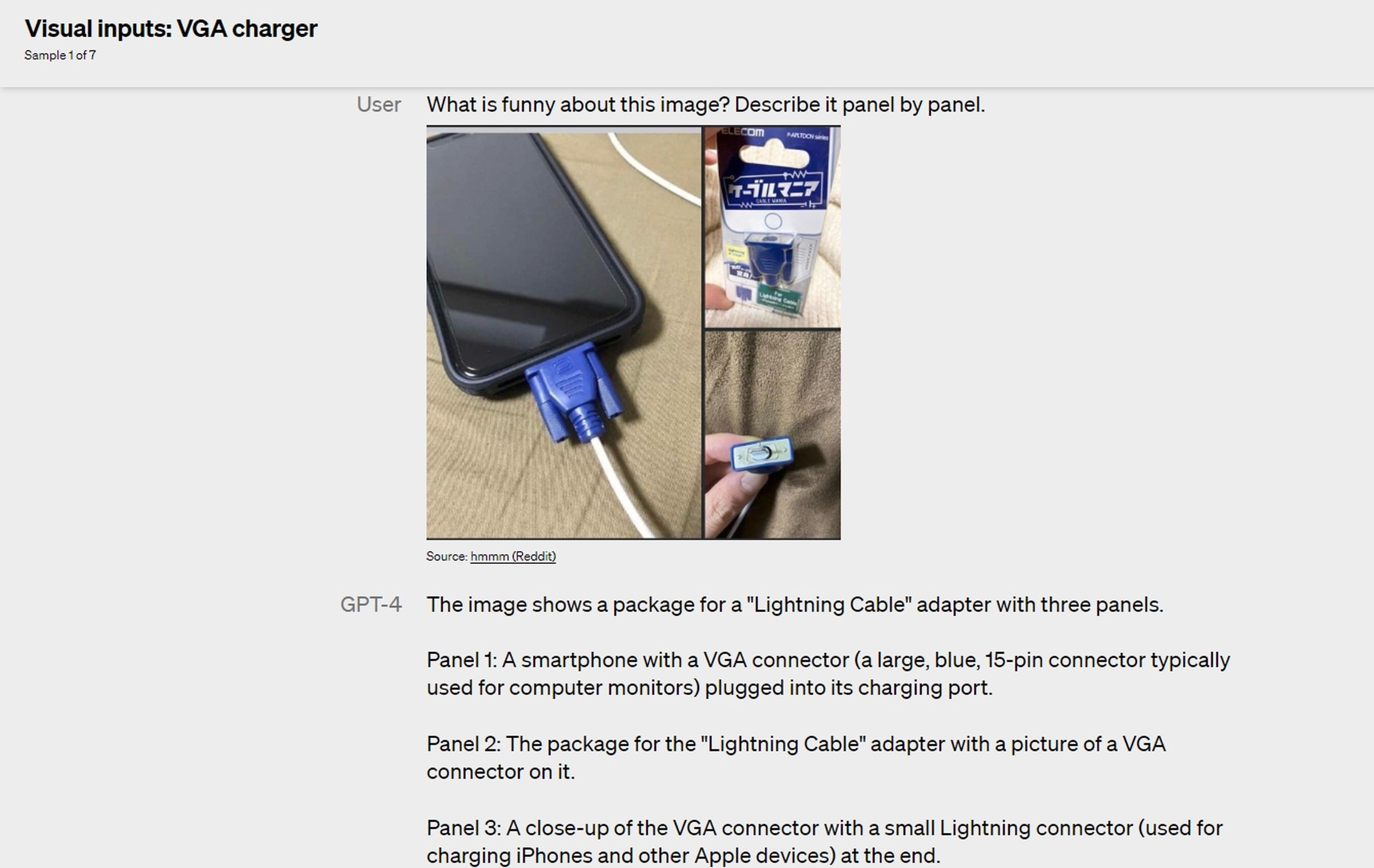

Another big difference, already announced by OpenAI, is its multimodal nature. Instead of working solely with text, GPT-4 can also accept images as input. That is, you can upload photos and ask GPT-4 to analyze them, to explain why the meme you show is funny, or to tell you what objects are in the image.

For example, OpenAI showed a picture of ingredients and asked what recipes could be made with them. The model first listed the items it recognized in the image and then suggested a variety of recipes. In the following image you can see another use case.

More languages

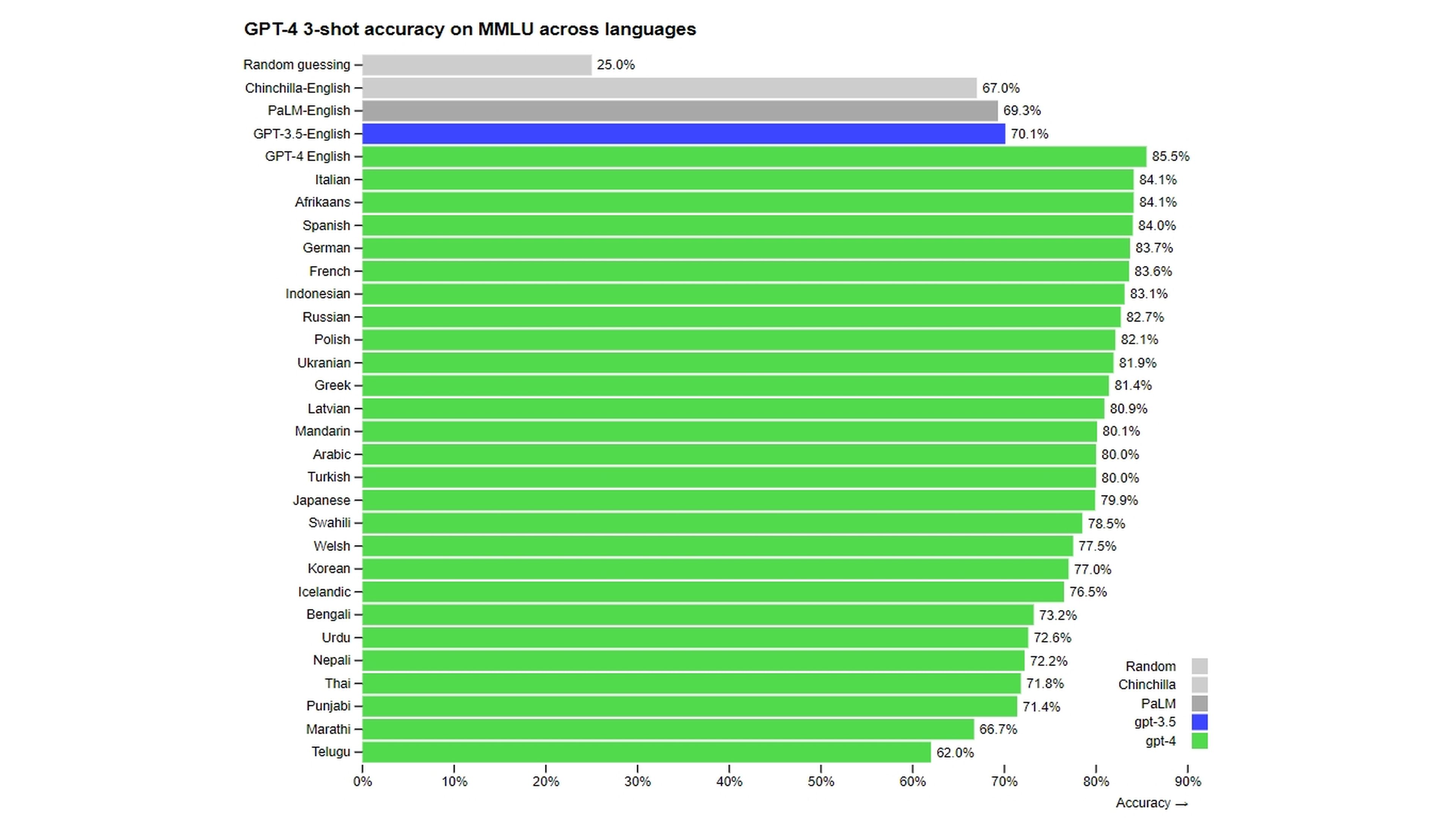

While English is still its first language, GPT-4 takes another big step forward with its multilingual capabilities. It is almost as accurate in Mandarin, Japanese, Afrikaans, Indonesian, Russian, and other languages as it is in its native language. In fact, it is more accurate in Punjabi, Thai, Arabic, Welsh, and Urdu than GPT-3.5 was in English.

Therefore, it is truly international, and its apparent understanding of concepts, combined with outstanding communication skills, could make it a truly next-level translation tool.

“To this we must add that internal optimization has reduced the computational and energy costs associated with the use of the model,” explains Pérez Suay.

Improved RLHF, although it still has limitations

According to OpenAI's own report, the company has placed special emphasis on using RLHF (Reinforcement Learning from Human Feedback).

This is a machine learning technique in which an AI system is trained using human feedback rather than data alone, with the aim of curbing the false information that tools based on these models sometimes provide.
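The article does not describe how OpenAI implements this, so the following is only a toy sketch of one commonly used ingredient of RLHF: a pairwise preference loss for training a reward model. It assumes a human has compared two answers and marked one as preferred; the function name `preference_loss` and the reward values are hypothetical and chosen for illustration only.

```python
import math


def preference_loss(reward_chosen: float, reward_rejected: float) -> float:
    """Pairwise preference loss for a reward model (illustrative only).

    The loss is small when the answer the human preferred gets a higher
    reward than the rejected one, and large when the order is reversed.
    """
    margin = reward_chosen - reward_rejected
    # Equivalent to -log(sigmoid(margin)), written as log(1 + exp(-margin)).
    return math.log1p(math.exp(-margin))


# Hypothetical reward scores for two answers to the same prompt.
agrees_with_human = preference_loss(reward_chosen=2.0, reward_rejected=0.0)
disagrees_with_human = preference_loss(reward_chosen=0.0, reward_rejected=2.0)
print(agrees_with_human < disagrees_with_human)
```

Minimizing a loss of this kind pushes the reward model to score human-preferred answers higher; in a full RLHF setup, that reward model would then be used to guide further training of the language model.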

OpenAI gave a group of experts early access to multiple versions of the GPT-4 model for testing. They corrected errors in the responses and even evaluated the model's ability to carry out phishing attacks.

“We spent 6 months making GPT-4 safer and more aligned. GPT-4 is 82% less likely to respond to requests for disallowed content and 40% more likely to produce factual responses than GPT-3.5 on our internal evaluations,” the company says. Of course, GPT-4 is not perfect, and OpenAI acknowledges this in its report.

What the future holds for GPT-4: pros and cons

When looking ahead for GPT-4, it makes sense to focus on the short term, meaning 2023, rather than the next 5-10 years. Things can change course so quickly in artificial intelligence that it is almost absurd to talk about even 2 years from now.

OpenAI will be commercialized on a large scale

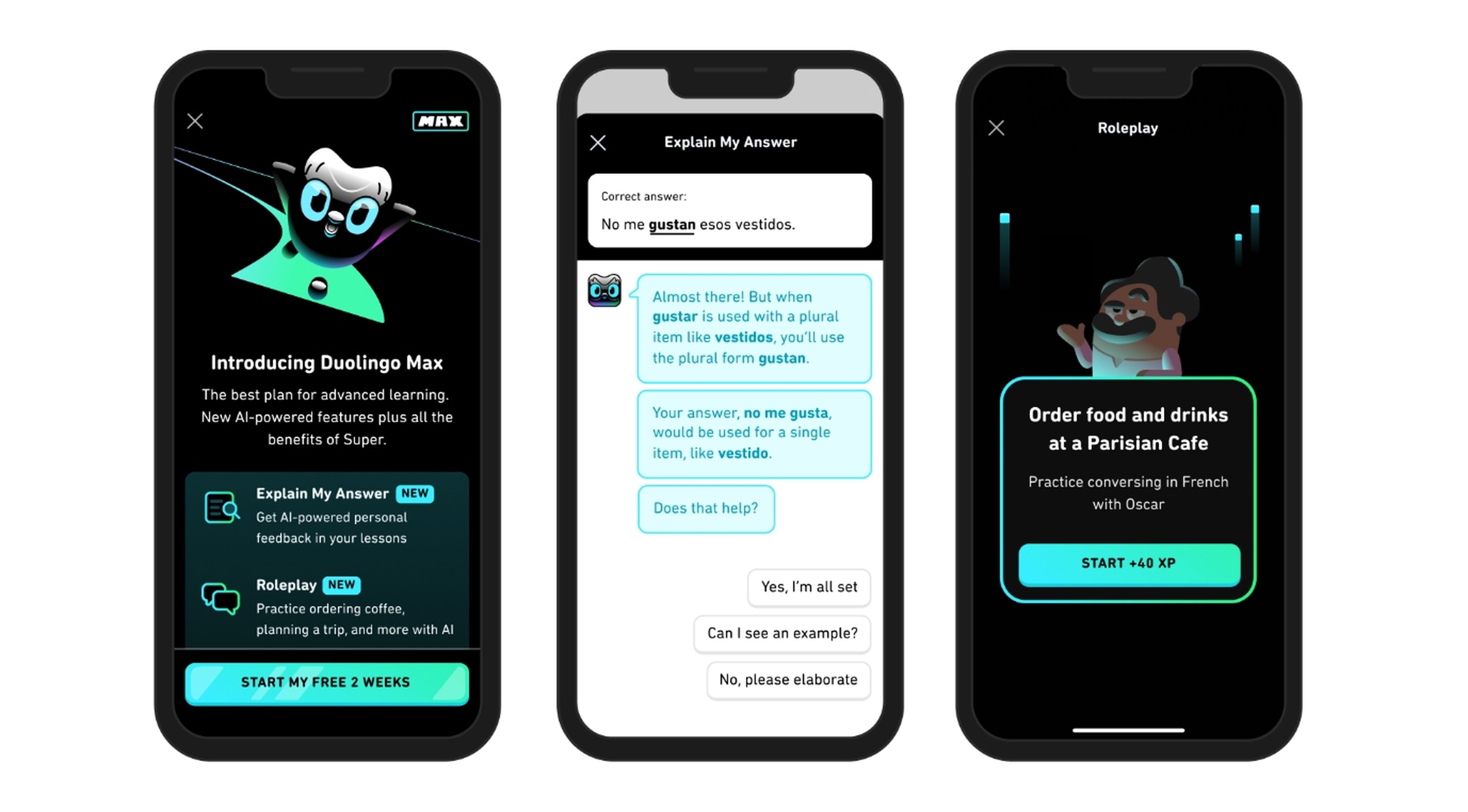

Many believe that OpenAI will earn most of its revenue by licensing its technology to other companies so they can create their own custom chatbots, rather than through the subscription model. This is already happening with GPT-4: companies such as Duolingo have integrated the model (Duolingo Max).

A new power race begins

Microsoft’s Bing is the first to integrate GPT models into search, kicking off a new race in a field that Google has dominated from the start. It remains to be seen whether Google, with the help of Bard, which is already opening to the public, manages to avoid these stumbling blocks.

Problems for those who feed on Internet clicks

There will also be questions about attribution and the impact on website traffic: if these tools can effectively summarize a full answer to a query, users will not need to click through to the website(s) from which the information came.

Regulators take note

2023 may finally be the year regulators take note of the AI disruption. Policies may eventually emerge that establish a framework of security and copyright laws for these AI models.

“I don’t think that GPT-4 is a trigger for the regulation of information. It is one more step, raising awareness, but in a very short time we will have many similar Transformers with different training, which will make their use much more natural,” Nicolás Franco Cerame, UPM professor and development director at mrHouston, tells Computer Hoy.

Fake news will increase

One of the downsides of the growth of GPT models is that bad actors can spread fake news much faster than before. Since these tools seek to resemble human speech, it will be difficult to tell their output apart from text written by people.

“It is important to be aware of the challenges and risks associated with the use of this technology, such as data bias, the generation of false or misleading content, and job replacement. To maximize benefits, it is critical to address these issues and ensure the ethical and responsible use of AI,” concludes Pérez Suay.
